80–120 kW GPU racks redefine physical layer risk. Cooling is engineered. Power is planned. But Layer 0—fiber cross connects and patch panels—still depends on manual moves in increasingly dense, warm halls.

Rack-scale systems now compress what used to be a cluster into a single cabinet footprint, including NVIDIA platforms built around 72 GPUs per rack. In practice, AI clusters fail at the patch panel before they fail at the GPU: one wrong cross connect can stall a synchronized job.

AI data center automation is the control that unlocks density safely. It turns high-density fiber management into a governed system—software addressable, logged, and repeatable—instead of a human bottleneck.

AI is pushing rack densities to ~100 kW today, with credible roadmaps beyond that, according to The Economic Times.

The physical layer feels the impact first, which is why AI data center automation has to start at the patch field.

A simple model shows why high-density fiber management becomes mandatory at 100kW rack connectivity.

| Scenario (example) | Fabric ports per rack | Duplex cross connects leaving rack | Strand count |
|---|---|---|---|
| 400G fabric, single rail | ~72 | ~72 | ~144 |
| 400G fabric, dual rail A/B | ~144 | ~144 | ~288 |
| 800G fabric, dual rail A/B | ~72 | ~72 | ~144 |
| Add storage + OOB | +40 to +80 | +40 to +80 | +80 to +160 |
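The arithmetic behind the table can be sketched in a few lines. This is an illustrative model only: it assumes each fabric port leaves the rack on its own duplex cross connect and that a duplex link uses two strands. The `Scenario` class and its field names are hypothetical, introduced here for the example.

```python
from dataclasses import dataclass

FIBERS_PER_DUPLEX = 2  # one transmit strand plus one receive strand


@dataclass(frozen=True)
class Scenario:
    name: str
    fabric_ports: int  # approximate fabric ports per rack, taken from the table

    @property
    def duplex_cross_connects(self) -> int:
        # Assumption: every fabric port leaves the rack on its own duplex cross connect.
        return self.fabric_ports

    @property
    def strands(self) -> int:
        return self.duplex_cross_connects * FIBERS_PER_DUPLEX


scenarios = [
    Scenario("400G fabric, single rail", 72),
    Scenario("400G fabric, dual rail A/B", 144),
    Scenario("800G fabric, dual rail A/B", 72),
]

for s in scenarios:
    print(f"{s.name}: {s.duplex_cross_connects} cross connects, {s.strands} strands")

# Storage and out-of-band links add 40 to 80 more duplex cross connects per rack.
extra_low = 40 * FIBERS_PER_DUPLEX
extra_high = 80 * FIBERS_PER_DUPLEX
print(f"Storage + OOB: +{extra_low} to +{extra_high} strands")
```

Changing `fabric_ports` to match a specific rack design gives a quick first estimate of how many strands the patch field has to absorb.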

Across XENOptics automated switching deployments, manual patching shows a 2.7% error rate versus 0.02% for automated workflows—an error profile that becomes unacceptable as cross connect counts climb.
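To see how those rates scale with cross connect count, here is a minimal sketch. It assumes the quoted rates apply per cross connect operation and that errors are independent; both are simplifications made for illustration, not claims from the deployment data.

```python
MANUAL_ERROR_RATE = 0.027       # 2.7% per operation, from the text
AUTOMATED_ERROR_RATE = 0.0002   # 0.02% per operation, from the text


def expected_errors(cross_connects: int, error_rate: float) -> float:
    """Expected number of bad cross connects in a batch."""
    return cross_connects * error_rate


def prob_at_least_one_error(cross_connects: int, error_rate: float) -> float:
    """Chance that a batch contains one or more errors (independence assumed)."""
    return 1.0 - (1.0 - error_rate) ** cross_connects


# Relative reduction in per-operation error rate when moving to automation.
relative_reduction = 1.0 - AUTOMATED_ERROR_RATE / MANUAL_ERROR_RATE

batch = 144  # e.g. one dual-rail 400G rack from the table above
for label, rate in (("manual", MANUAL_ERROR_RATE), ("automated", AUTOMATED_ERROR_RATE)):
    print(
        f"{label}: expected errors = {expected_errors(batch, rate):.3f}, "
        f"P(at least one) = {prob_at_least_one_error(batch, rate):.3f}"
    )
print(f"relative error-rate reduction: {relative_reduction:.1%}")
```

Under these assumptions, a single rack's worth of manual patching is very likely to contain at least one error, which is the point the measured rates are making.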

At 100kW, high-density fiber management is a reliability requirement, not an optimization.

In synchronized training, the fabric is part of the machine. AI data center automation needs to reach Layer 0 because it's where small mistakes become cluster-wide stalls.

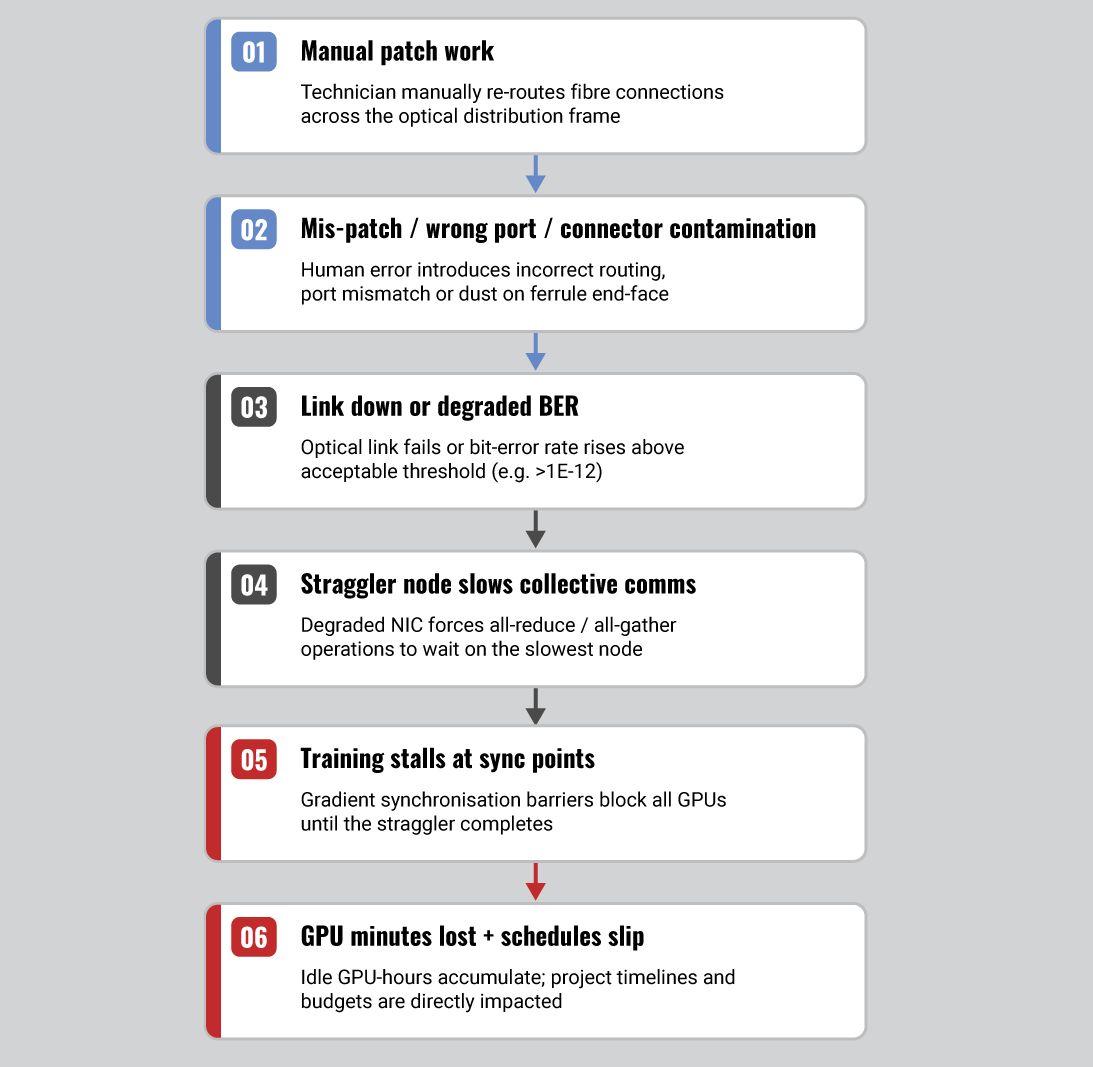

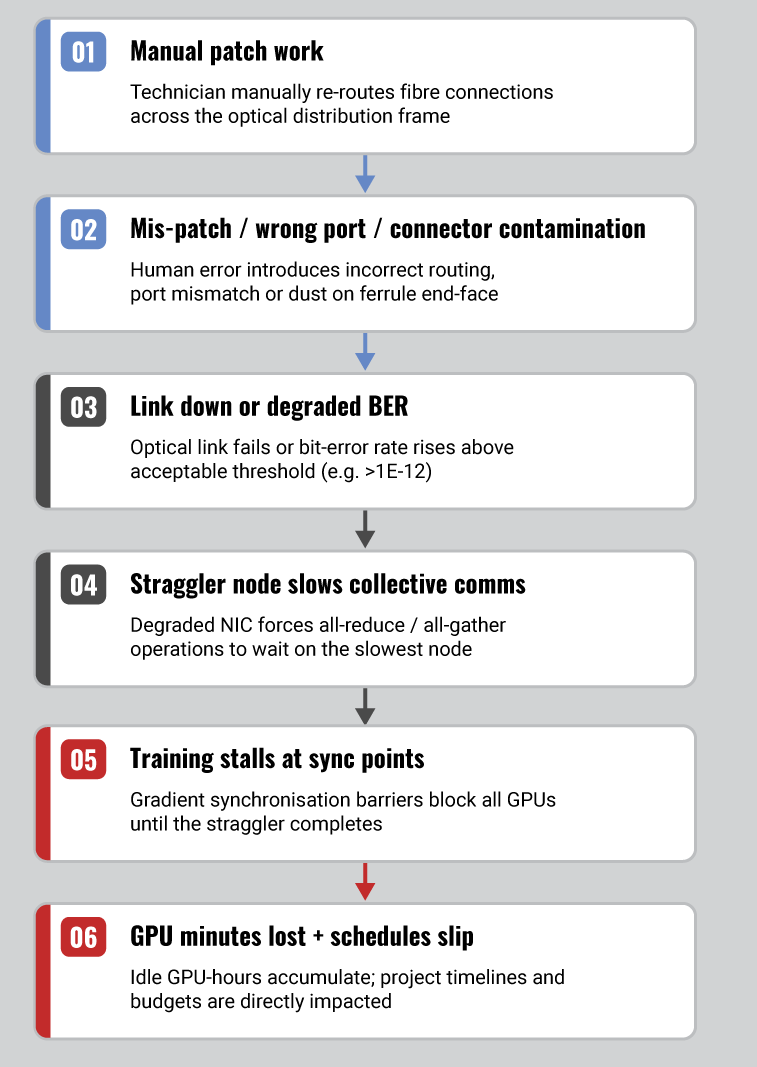

A single mis-patch can create path asymmetry, silent oversubscription, or a one-rack island. AI data center automation replaces manual verification with deterministic intent: the system connects the exact ports you request.
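One way to picture "deterministic intent" is a diff between the cross connect map you requested and the one actually in place. The sketch below is purely illustrative: the port naming scheme, the `PortMap` type, and `diff_against_intent` are hypothetical and do not represent any vendor API.

```python
from typing import Dict, List, Tuple

PortMap = Dict[str, str]  # source port -> destination port


def diff_against_intent(intended: PortMap, observed: PortMap) -> List[Tuple[str, str, str]]:
    """Return (source, intended_dest, observed_dest) for every mismatch."""
    mismatches = []
    for src in sorted(set(intended) | set(observed)):
        want = intended.get(src, "<none>")
        have = observed.get(src, "<none>")
        if want != have:
            mismatches.append((src, want, have))
    return mismatches


# Hypothetical example: rail B of one GPU was landed on the rail A leaf.
intended = {
    "rack12/gpu03/p1": "leafA/p17",
    "rack12/gpu03/p2": "leafB/p17",
}
observed = {
    "rack12/gpu03/p1": "leafA/p17",
    "rack12/gpu03/p2": "leafA/p18",
}

for src, want, have in diff_against_intent(intended, observed):
    print(f"MISMATCH {src}: intended {want}, observed {have}")
```

An empty diff means the physical layer matches the requested state. A non-empty diff names exactly which port to fix, rather than leaving a technician to trace fibers by hand.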

Distributed training waits for the slowest worker. One degraded link can force global pauses at synchronization points.
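The slowest-worker effect is easy to model. The sketch below assumes a fully synchronous step, where the step time is the maximum over all workers. The per-worker timings are made-up numbers for illustration.

```python
from typing import Sequence


def synchronized_step_time(worker_times_s: Sequence[float]) -> float:
    """A synchronous step finishes only when the slowest worker finishes."""
    return max(worker_times_s)


def wasted_gpu_seconds(worker_times_s: Sequence[float]) -> float:
    """Total time the faster workers spend waiting at the synchronization point."""
    step = synchronized_step_time(worker_times_s)
    return sum(step - t for t in worker_times_s)


# Hypothetical timings: 72 GPUs, one of them behind a degraded link.
healthy = [1.00] * 72
degraded = [1.00] * 71 + [1.60]

print(f"healthy step:  {synchronized_step_time(healthy):.2f} s")
print(f"degraded step: {synchronized_step_time(degraded):.2f} s")
print(f"GPU-seconds idle per step: {wasted_gpu_seconds(degraded):.1f}")
```

In this toy case, one slow link stretches every step for all 72 GPUs, and the waiting time accumulates on every iteration of the job.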

Idle cost per minute = (GPU count × internal $/GPU-hour) ÷ 60
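The formula translates directly into code. The GPU count and the internal $/GPU-hour figure below are placeholders chosen for illustration; substitute your own numbers.

```python
def idle_cost_per_minute(gpu_count: int, cost_per_gpu_hour: float) -> float:
    """Idle cost per minute = (GPU count x internal $/GPU-hour) / 60."""
    return gpu_count * cost_per_gpu_hour / 60.0


def stall_cost(gpu_count: int, cost_per_gpu_hour: float, stall_minutes: float) -> float:
    """Cost of a stall of the given length across the whole stalled domain."""
    return idle_cost_per_minute(gpu_count, cost_per_gpu_hour) * stall_minutes


# Placeholder inputs (assumptions, not figures from the article).
gpus = 1024
rate = 2.50  # internal $/GPU-hour

print(f"idle cost per minute: ${idle_cost_per_minute(gpus, rate):,.2f}")
print(f"20-minute stall:      ${stall_cost(gpus, rate, 20):,.2f}")
```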

When the stalled domain is hundreds or thousands of GPUs, minutes become budget and schedule risk.

AI labs reconfigure weekly, sometimes daily. With AI data center automation, change becomes controlled execution, not improvisation.

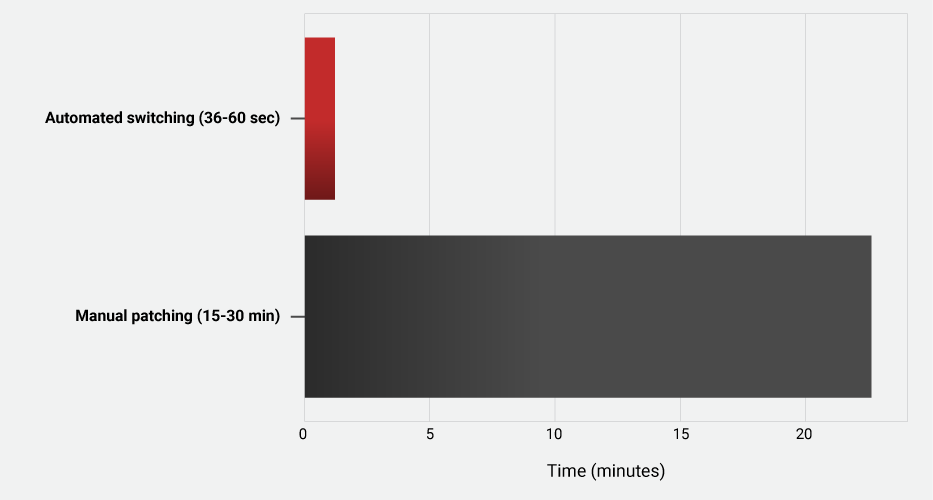

Robotic fiber switching for GPU clusters, at 36–60 s per switching action, can deliver 30–40× faster provisioning than manual changes, alongside ~99% error reduction from controlled workflows and audit logs. That is the operational meaning of zero-touch Layer 0 at 100kW density.

For AI data center automation to work at 100kW rack connectivity, the physical layer needs any-to-any connectivity, stable optics, and safe failure behavior.

A practical pattern is an automated cross connect tier for zero-touch Layer 0 operations.

In a connectorized design such as XENOptics XSOS 288 building blocks, target performance can hold to ≤0.8 dB insertion loss per automated cross connect when cleanliness and inspection are treated as first-class operations.
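A quick loss-budget check shows how the ≤0.8 dB target fits into a link. Only the 0.8 dB per automated cross connect figure comes from the text. The fiber attenuation, other connector loss, and optics budget below are placeholder assumptions that depend on your transceivers and cabling.

```python
XC_LOSS_DB = 0.8  # worst-case insertion loss per automated cross connect, from the text


def link_loss_db(
    cross_connects: int,
    fiber_km: float,
    fiber_db_per_km: float,
    other_connector_loss_db: float,
) -> float:
    """Sum the worst-case loss contributions along one optical path."""
    return (
        cross_connects * XC_LOSS_DB
        + fiber_km * fiber_db_per_km
        + other_connector_loss_db
    )


def margin_db(budget_db: float, loss_db: float) -> float:
    """Positive margin means the path fits inside the optics' loss budget."""
    return budget_db - loss_db


# Placeholder inputs (assumptions for illustration only).
loss = link_loss_db(
    cross_connects=2,          # two automated hops in the path
    fiber_km=0.15,             # 150 m in-hall run
    fiber_db_per_km=0.4,       # assumed planning value for the fiber
    other_connector_loss_db=0.5,
)
budget = 3.0                   # assumed channel insertion loss budget for the optics

print(f"path loss: {loss:.2f} dB, margin: {margin_db(budget, loss):.2f} dB")
```

Running this per path type before deployment makes it clear how many automated hops a given optic can tolerate.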

High-density fiber management has to match the port explosion of modern fabrics.

Automation should add minimal steady load: ~6 W idle, <0.5 W deep sleep, with power drawn primarily during switching actions.
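For scale, the steady-state figures convert to annual energy as follows. The sketch treats ~6 W and 0.5 W as constant draws for a full year, which is an upper-bound simplification for each state, and it ignores the short bursts of power during switching.

```python
HOURS_PER_YEAR = 24 * 365  # 8760 hours, ignoring leap years


def annual_energy_kwh(power_w: float, hours: float = HOURS_PER_YEAR) -> float:
    """Energy in kWh for a constant draw of `power_w` watts over `hours`."""
    return power_w * hours / 1000.0


IDLE_W = 6.0         # approximate idle draw, from the text
DEEP_SLEEP_W = 0.5   # upper bound for deep sleep, from the text

print(f"idle all year:       {annual_energy_kwh(IDLE_W):.2f} kWh")
print(f"deep sleep all year: {annual_energy_kwh(DEEP_SLEEP_W):.2f} kWh")
```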

Evaluate AI data center automation per MW of AI IT load.

Based on XENOptics deployment economics and operational cost modeling:

| Value driver (annual, per MW) | Annual value |
|---|---|
| Labor automation | $425,000 |
| Thermal energy | $115,000 |
| Risk mitigation | $135,000 |
| Total annual value | $675,000 |

That model yields 10.3-month payback and 102% IRR. In high-change AI environments, AI data center automation can also defer incremental facility spend that exists mainly to support manual work—extra cooling margin for technician comfort, access-driven layout compromises, and repeated change windows.

Assumptions vary by region, labor model, and incident cost. Treat this as a modeling template, not a guarantee.
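As a template, the value model can be reproduced in a few lines. The three driver values and the 10.3-month payback come from the model above. The implied investment is back-computed here under the assumption of simple (undiscounted) payback and is not a figure stated in the source. The IRR is not recomputed because the evaluation horizon is not given.

```python
# Annual value per MW of AI IT load, from the table above.
value_drivers = {
    "Labor automation": 425_000,
    "Thermal energy": 115_000,
    "Risk mitigation": 135_000,
}
total_annual_value = sum(value_drivers.values())


def simple_payback_months(investment: float, annual_value: float) -> float:
    """Undiscounted payback: months of value needed to recover the investment."""
    return 12.0 * investment / annual_value


def implied_investment(annual_value: float, payback_months: float) -> float:
    """Inverse of simple payback; an assumption, not a published number."""
    return annual_value * payback_months / 12.0


PAYBACK_MONTHS = 10.3  # from the text
investment = implied_investment(total_annual_value, PAYBACK_MONTHS)

print(f"total annual value per MW: ${total_annual_value:,}")
print(f"implied investment per MW: ${investment:,.0f}")
print(f"check payback: {simple_payback_months(investment, total_annual_value):.1f} months")
```

Swap in regional labor rates, energy prices, and incident costs to see how sensitive the payback is to each driver.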

As halls run warmer, routine human patching becomes an avoidable exposure: published guidance allows inlet temperatures up to 35°C, and liquid cooling supports higher facility water temperatures (see Thermal Guidelines and Temperature Measurements in Data Centers, by Magnus Herrlin, Ph.D., Lawrence Berkeley National Laboratory).

AI data center automation strengthens operational control.

A rollout that works in production reduces risk and integrates with existing operations.

Prioritize: field-replaceable modules, no traffic interruption for adjacent paths, and carrier-class environmental readiness such as NEBS Level 3 and ETSI EN 300 019 Class 3.2 where required.

Major infrastructure vendors including Ericsson have highlighted the operational necessity of NEBS Level 3 compliance for distributed telecom environments.

At 100kW density, the "manual default" compounds as port counts and change velocity rise.

| Outcome | Manual (100kW rack) | Automated (zero-touch Layer 0) |
|---|---|---|
| Change window | 15–30 minutes | 36–60 seconds |
| Human exposure | Frequent entries | Remote by default |
| Error rate (measured) | ~2.7% | ~0.02% |
| Auditability | Ticket-dependent | Per-cross-connect log |
| Remediation | Investigate + rework | Rollback or reroute in software |

Manual Patch Work >> Cascading Impact on GPU Training

If you're building 100kW racks, Layer 0 cannot remain an artisanal process. AI data center automation is how zero-touch Layer 0 becomes a standard operating mode. Book an AI Cluster Automation Assessment.
